[Chart: Score by Metric; series: File Operations, Code Editing, Project Management, MCP Integration]
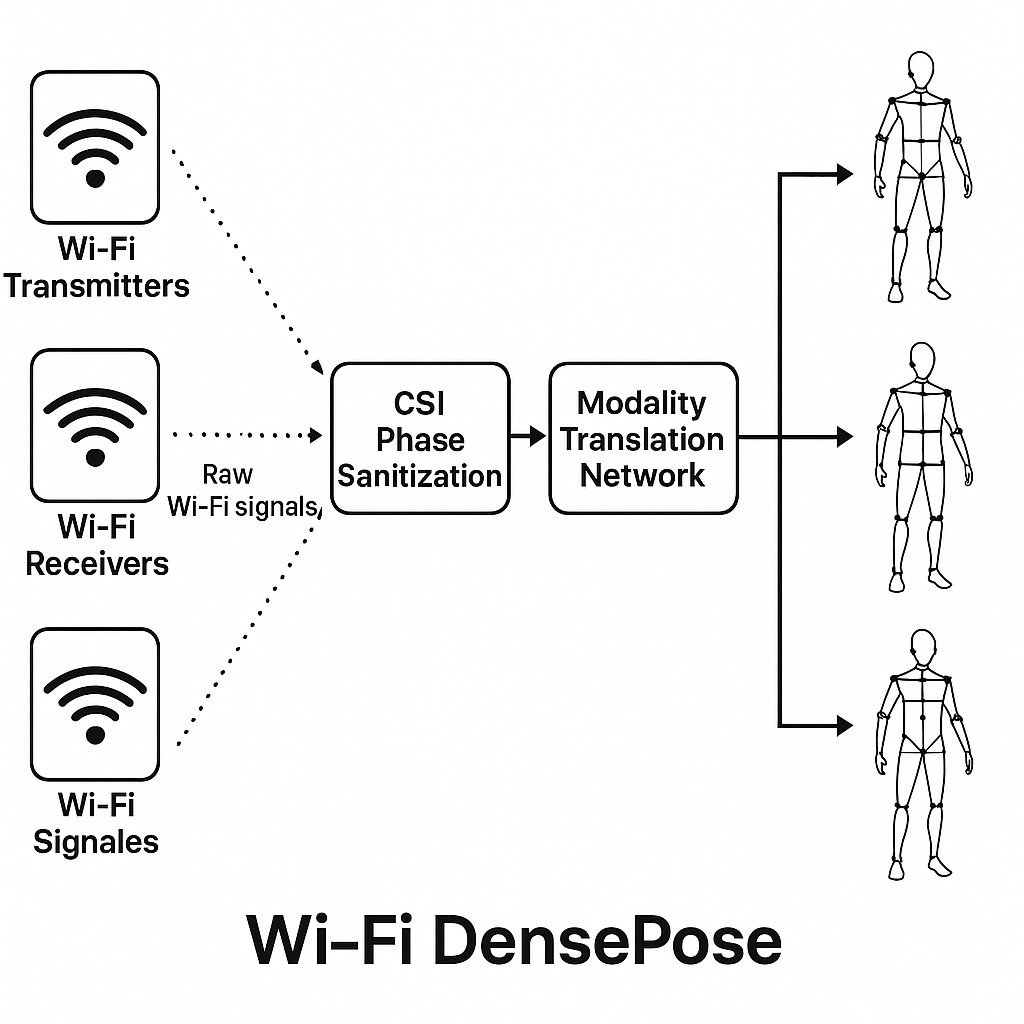

WiFi DensePose

Human Tracking Through Walls Using WiFi Signals

Revolutionary WiFi-Based Human Pose Detection

AI can track your full-body movement through walls using just WiFi signals. Researchers at Carnegie Mellon have trained a neural network to turn basic WiFi signals into detailed wireframe models of human bodies.

Through Walls

Works through solid barriers with no line of sight required

Privacy-Preserving

No cameras or visual recording - just WiFi signal analysis

Real-Time

Maps 24 body regions in real time at a 100 Hz sampling rate

Low Cost

Built using $30 commercial WiFi hardware

Hardware Configuration

3×3 Antenna Array

WiFi Configuration

Real-time CSI Data
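To make the hardware description concrete: a 3×3 antenna array yields one complex channel estimate per transmit/receive pair and subcarrier. The sketch below builds a synthetic frame with that shape and splits it into amplitude and phase. The subcarrier count, the function names, and the random data are assumptions for illustration only; none of them are taken from the actual system.

```python
import cmath
import random

NUM_TX = 3             # transmit antennas (3x3 array described above)
NUM_RX = 3             # receive antennas
NUM_SUBCARRIERS = 30   # assumption: the subcarrier count is not stated here


def random_csi_frame(seed=0):
    """Build a synthetic CSI frame: one complex value per (tx, rx, subcarrier)."""
    rng = random.Random(seed)
    return [
        [
            [
                # cmath.rect converts (magnitude, angle) to a complex number.
                cmath.rect(rng.uniform(0.1, 1.0), rng.uniform(-cmath.pi, cmath.pi))
                for _ in range(NUM_SUBCARRIERS)
            ]
            for _ in range(NUM_RX)
        ]
        for _ in range(NUM_TX)
    ]


def amplitude_and_phase(frame):
    """Split a complex CSI frame into amplitude and phase arrays of the same shape."""
    amplitude = [[[abs(h) for h in link] for link in tx] for tx in frame]
    phase = [[[cmath.phase(h) for h in link] for link in tx] for tx in frame]
    return amplitude, phase


frame = random_csi_frame()
amplitude, phase = amplitude_and_phase(frame)
```

At the 100 Hz sampling rate quoted above, a receiver would produce one such frame every 10 ms.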

Live Demonstration

WiFi Signal Analysis

Human Pose Detection

System Architecture

CSI Input

Channel State Information collected from WiFi antenna array

Phase Sanitization

Remove hardware-specific noise and normalize signal phase

Modality Translation

Convert WiFi signals to visual representation using CNN

DensePose-RCNN

Extract human pose keypoints and body part segmentation

Wireframe Output

Generate final human pose wireframe visualization
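The phase sanitization step can be illustrated with a common two-part recipe: unwrap the phase across subcarriers, then subtract a fitted linear trend, which is one way timing-related phase offsets are often removed from CSI. This is a minimal sketch under that assumption and not necessarily the method the researchers used; the function names are hypothetical, and each input list is assumed to be non-empty.

```python
import math


def unwrap(phases):
    """Remove 2*pi jumps between neighbouring subcarriers."""
    out = [phases[0]]
    for p in phases[1:]:
        delta = p - out[-1]
        # Wrap the step into [-pi, pi) so consecutive samples never jump by more than pi.
        delta = (delta + math.pi) % (2 * math.pi) - math.pi
        out.append(out[-1] + delta)
    return out


def remove_linear_trend(values):
    """Subtract the least-squares line fitted over the subcarrier index."""
    n = len(values)
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    sxx = sum((i - mean_x) ** 2 for i in range(n))
    sxy = sum((i - mean_x) * (v - mean_y) for i, v in enumerate(values))
    slope = sxy / sxx if sxx else 0.0
    return [v - (mean_y + slope * (i - mean_x)) for i, v in enumerate(values)]


def sanitize_phase(raw_phases):
    """Unwrap the raw phase of one antenna link, then remove its linear trend."""
    return remove_linear_trend(unwrap(raw_phases))
```

Applied to a phase that is purely a wrapped linear ramp, `sanitize_phase` returns values close to zero; whatever remains after detrending real measurements is the part of the phase that varies with the environment.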

Performance Analysis

[Chart comparing WiFi-based (Same Layout) and Image-based (Reference) results]

Advantages & Limitations

Advantages

- Through-wall detection
- Privacy preserving
- Lighting independent
- Low cost hardware
- Uses existing WiFi

Limitations

- Performance drops in different layouts
- Requires WiFi-compatible devices
- Training requires synchronized data

Real-World Applications

Elderly Care Monitoring

Monitor elderly individuals for falls or emergencies without invading privacy. Track movement patterns and detect anomalies in daily routines.

Home Security Systems

Detect intruders and monitor home security without visible cameras. Track multiple persons and identify suspicious movement patterns.

Healthcare Patient Monitoring

Monitor patients in hospitals and care facilities. Track vital signs through movement analysis and detect health emergencies.

Smart Building Occupancy

Optimize building energy consumption by tracking occupancy patterns. Control lighting, HVAC, and security systems automatically.

AR/VR Applications

Enable full-body tracking for virtual and augmented reality applications without wearing additional sensors or cameras.
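As one illustration of the elderly-care use case above, a fall could be flagged when an estimated body height drops sharply within a short time window. The sketch below assumes a hypothetical stream of hip-height estimates in metres, one per sample at the 100 Hz rate quoted earlier; the function name and both thresholds are made up for illustration and would need tuning against real data.

```python
SAMPLE_RATE_HZ = 100  # sampling rate quoted in this document


def detect_fall(heights_m, drop_m=0.5, window_s=0.5, sample_rate_hz=SAMPLE_RATE_HZ):
    """Return the index of the first sample whose height is at least drop_m
    below the highest value seen in the preceding window_s seconds, else None."""
    window = max(1, int(window_s * sample_rate_hz))
    for i in range(len(heights_m)):
        start = max(0, i - window)
        recent_max = max(heights_m[start:i + 1])
        if recent_max - heights_m[i] >= drop_m:
            return i
    return None


# Synthetic example: standing for 2 s, falling over 0.3 s, then lying still.
standing = [1.0] * 200
falling = [1.0 - 0.8 * (k + 1) / 30 for k in range(30)]
lying = [0.2] * 100
fall_index = detect_fall(standing + falling + lying)

# A slow, controlled sit-down over 3 s should not trigger the detector.
sitting_down = [1.0 - 0.5 * k / 299 for k in range(300)]
sit_index = detect_fall(sitting_down)
```

Comparing against the recent maximum rather than a fixed baseline keeps the check independent of the person's standing height, though a rule this simple would likely misfire on noisy estimates without smoothing.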

Implementation Considerations

While WiFi DensePose offers revolutionary capabilities, successful implementation requires careful consideration of environment setup, data privacy regulations, and system calibration for optimal performance.